Aloception

Aloception is a software vision sensor: a camera-based perception solution built to address the challenges of future mobility.

Embedded on a stereo camera, Aloception detects the components of a scene that a robot needs in order to navigate. It provides 3D scene understanding in a Bird’s-Eye View (BEV) for autonomous and/or assisted mobility.

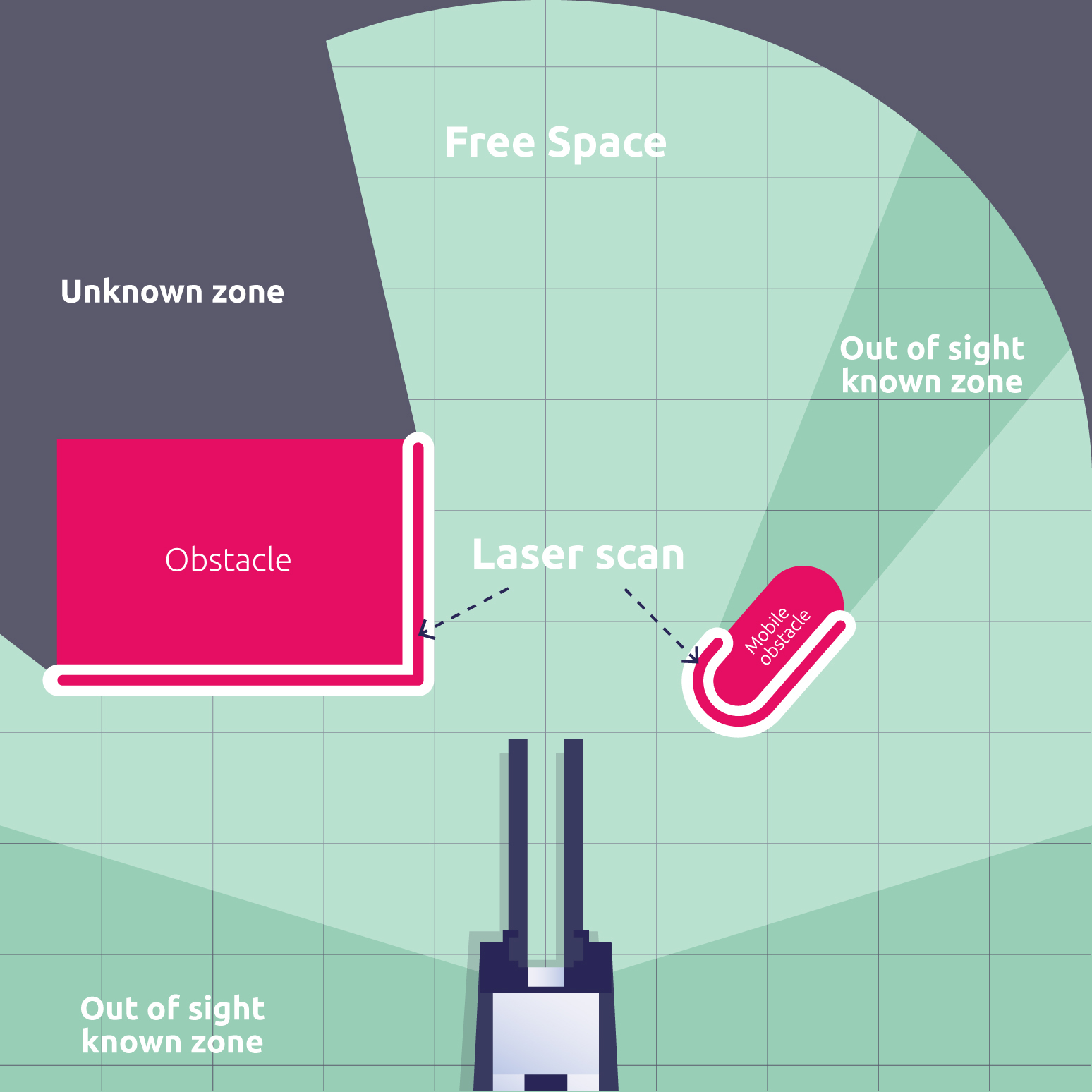

The BEV is an enriched representation of a navigable scene. All the information needed to navigate through an environment is combined into a single output:

- Freespace
- Static obstacles
- Dynamic obstacles
- Laser scan
- More valuable information (depth map, point cloud…)

On-Camera AI & On-Board AI

Whether your vision system relies on an embedded computing board or an all-in-one camera system, our software vision sensor is your solution.

Aloception adapts to a variety of stereo camera sensors and hardware setups.

Integration

Tested on Linux, Aloception can be integrated into your project over UDP, over ROS, through a custom protocol, or directly from Python.
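As a rough illustration of the UDP option, the sketch below opens a UDP socket with Python's standard `socket` module and reads one datagram as raw bytes. It is not Aloception's API: the host, the port, and the helper names `open_receiver` and `receive_datagram` are assumptions made for this example, and the message layout is not described here, so the payload is left undecoded.

```python
import socket
from typing import Optional

# Assumed values for illustration only; the actual address, port and
# message format used by Aloception are not specified in this document.
LISTEN_HOST = "127.0.0.1"
LISTEN_PORT = 0  # 0 lets the OS pick a free port; replace with your configured port


def open_receiver(host: str = LISTEN_HOST,
                  port: int = LISTEN_PORT,
                  timeout_s: float = 1.0) -> socket.socket:
    """Create a UDP socket bound to (host, port) with a receive timeout."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.settimeout(timeout_s)
    sock.bind((host, port))
    return sock


def receive_datagram(sock: socket.socket, max_bytes: int = 65535) -> Optional[bytes]:
    """Return the next datagram payload as raw bytes, or None on timeout."""
    try:
        payload, _sender = sock.recvfrom(max_bytes)
    except socket.timeout:
        return None
    return payload
```

In a real integration, `receive_datagram` would typically be called in a loop and the returned bytes decoded according to whatever message format the sender is configured to emit.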

More about Aloception

Watch the capabilities of Aloception in various environments: